Meta's Muse Spark: What It Actually Means for Business

TL;DR

Meta just shipped Muse Spark. The branding is "personal superintelligence." The reality is more interesting than the slogan.

I've been building AI-powered tools for clients across Kuwait and the GCC for two years now. Every time a new model drops, I get the same question within 24 hours: "Should we be using this?" Usually from a founder who saw a LinkedIn post about it and got nervous they were missing out.

Here's the honest answer for Muse Spark — and more importantly, the framework I use to decide whether any new AI model is worth integrating into a client project.

What Muse Spark Actually Is (Minus the Marketing)

Strip "personal superintelligence" from the conversation for a second. Muse Spark is Meta's new flagship model, released April 2026 by the newly formed Meta Superintelligence Labs. It's a ground-up rebuild — not a fine-tune of Llama, not a quick follow-up. A new architecture with a new team behind it.

The capabilities that actually matter:

- Natively multimodal. Text, images, documents — processed together, not bolted on as an afterthought. Table stakes in 2026, but Meta's implementation is solid.

- Tool use. The model can call external APIs, query databases, trigger workflows. This is where business value lives.

- Visual chain of thought. It reasons through a photo step by step — useful for inspection, document analysis, anything where "looking at the thing" is the hard part of the job.

- Multi-agent orchestration. A "Contemplating mode" that spins up parallel agents to tackle harder problems. Think of it as Muse Spark arguing with itself until it lands on a better answer.
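How Contemplating mode combines its agents internally isn't covered here. Treat the following as an illustration of the general idea, not Meta's implementation. It is a minimal Python sketch of the self-consistency pattern: several attempts run in parallel, and the answer most of them agree on wins. The `Agent` signature, `solve_with_parallel_agents`, and the stand-in `fake_agent` are hypothetical. A real agent would wrap a model call.

```python
from collections import Counter
from concurrent.futures import ThreadPoolExecutor
from typing import Callable

# Hypothetical signature: an "agent" takes a question and a seed (so each attempt
# can differ) and returns a candidate answer. In practice this would wrap a model call.
Agent = Callable[[str, int], str]


def solve_with_parallel_agents(question: str, agent: Agent, n_agents: int = 5) -> str:
    """Run n_agents attempts concurrently and return the most common answer."""
    with ThreadPoolExecutor(max_workers=n_agents) as pool:
        # Each attempt gets its own seed so the candidates are not identical copies.
        candidates = list(pool.map(lambda seed: agent(question, seed), range(n_agents)))
    # Majority vote; on a tie, Counter keeps the answer it saw first.
    answer, _votes = Counter(candidates).most_common(1)[0]
    return answer


if __name__ == "__main__":
    # Stand-in agent that gets one attempt out of five wrong.
    def fake_agent(question: str, seed: int) -> str:
        return "42" if seed != 3 else "41"

    print(solve_with_parallel_agents("What is 6 x 7?", fake_agent))
```

The trade-off is the one flagged later under bad fits. Five attempts cost roughly five calls and add latency, which buys a better answer on hard problems.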

On benchmarks, in Contemplating mode, it scores 58% on Humanity's Last Exam and 38% on FrontierScience Research. That puts it in competition with GPT Pro and Gemini Deep Think at the extreme-reasoning end, which is exactly where the frontier currently sits.

Available now at meta.ai. Private API preview for select developers.

That's the what. Now the so-what.

Why I'm Paying Attention (and You Should Too)

Here's the thing about AI releases in 2026 — most are noise. Incremental bumps on benchmarks nobody outside the lab actually cares about. Muse Spark is different for three reasons, and all three show up in client projects I touch weekly.

1. Multimodal Is Finally Ready for Production

For the longest time, "multimodal AI" meant "we added an image endpoint." It kind of worked. Kind of.

I burned two hours last quarter trying to get an older model to extract structured data from a photographed invoice in Arabic. Half the time, the numbers were wrong. The other half, the model hallucinated line items that weren't there. Ugly workaround territory: OCR pipeline, then cleanup script, then LLM pass, then validation layer. Four moving parts just to read an invoice.

Muse Spark and its peers are finally at the point where visual document processing is reliable enough to ship in one shot. That changes the math on a bunch of projects I see every month — invoice processing, ID verification, quality control on manufacturing lines, menu-to-order flows for restaurants. Problems that used to need bespoke OCR pipelines can now be handled by a well-prompted model call.

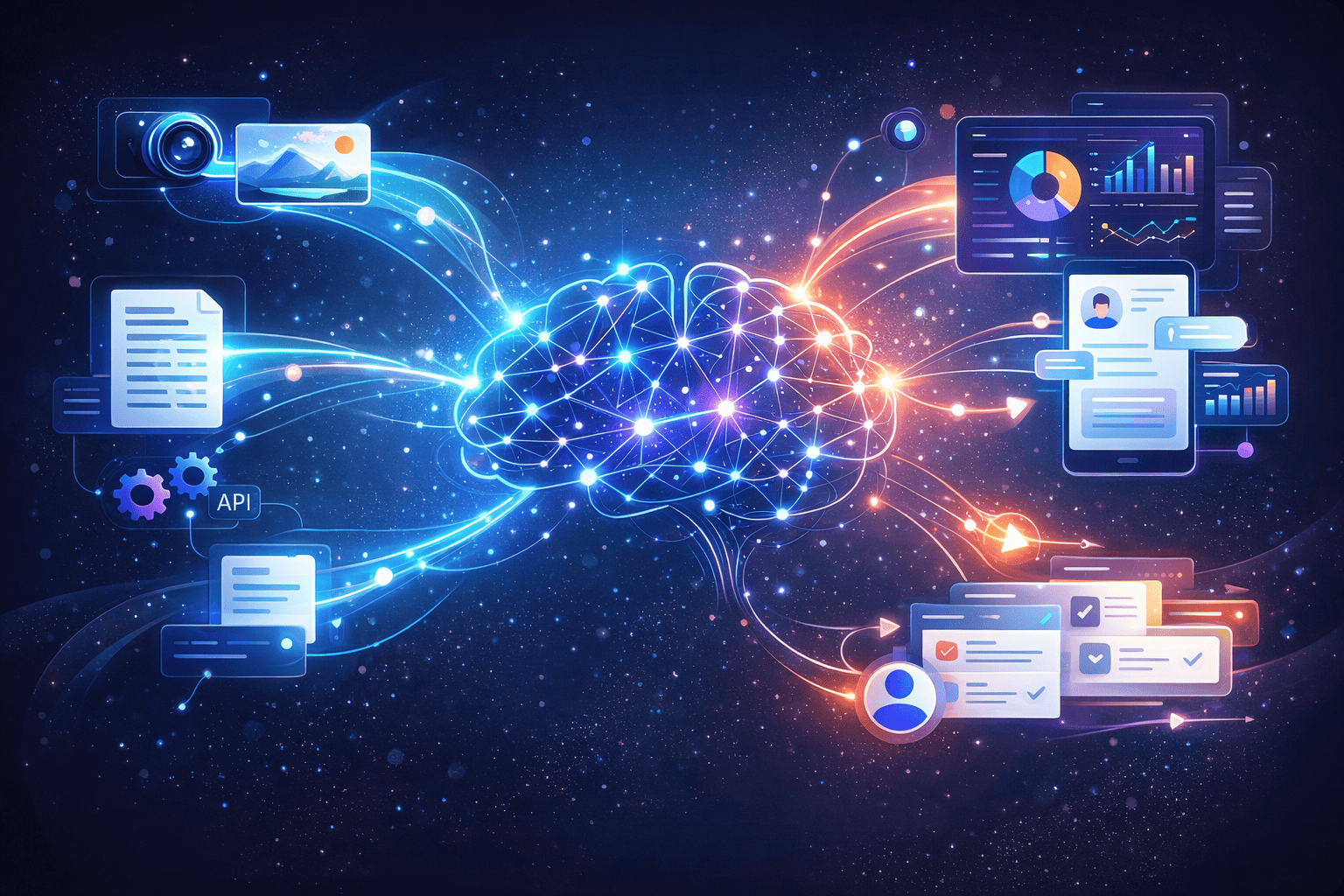

2. Tool Use Is Where Real Automation Lives

A model that can write poetry is cute. A model that can read an email, check inventory in your ERP, draft a response, and queue it for approval? That's software you can charge money for.

Tool use is the bridge between "AI demo" and "AI in production." We covered the broader shift in "AI Agents vs Chatbots: Why 2026 Is the Year of Autonomous AI," and Muse Spark pushes it further. If you haven't read up on MCP (Model Context Protocol) servers, MCP is the protocol that makes this kind of integration clean instead of held together with string and hope.
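To make "tool use" concrete, here is a minimal Python sketch of the application side. The model proposes a tool call as JSON, and your code decides whether to run it. The tool names, the in-memory inventory, and the `{"name": ..., "arguments": {...}}` shape are assumptions for illustration. Each vendor, and MCP, defines its own wire format.

```python
import json
from typing import Any, Callable

# Hypothetical stand-ins for an ERP inventory table and a human approval queue.
INVENTORY = {"SKU-100": 12, "SKU-200": 0}
APPROVAL_QUEUE: list[dict[str, Any]] = []


def check_inventory(sku: str) -> dict[str, Any]:
    qty = INVENTORY.get(sku, 0)
    return {"sku": sku, "in_stock": qty > 0, "quantity": qty}


def queue_for_approval(draft: str) -> dict[str, Any]:
    # Drafts wait here for a person; nothing is sent to the customer automatically.
    APPROVAL_QUEUE.append({"draft": draft, "status": "pending"})
    return {"queued": True, "position": len(APPROVAL_QUEUE)}


# Allow-list: the only functions the model is permitted to trigger.
TOOLS: dict[str, Callable[..., dict[str, Any]]] = {
    "check_inventory": check_inventory,
    "queue_for_approval": queue_for_approval,
}


def dispatch(tool_call_json: str) -> dict[str, Any]:
    """Execute one model-issued tool call shaped like {"name": ..., "arguments": {...}}."""
    call = json.loads(tool_call_json)
    name = call.get("name")
    if name not in TOOLS:
        # Never run something the model invented; report it back instead.
        return {"error": f"unknown tool: {name}"}
    return TOOLS[name](**call.get("arguments", {}))


if __name__ == "__main__":
    # Simulated model output; a real model would emit these after reading the email.
    print(dispatch('{"name": "check_inventory", "arguments": {"sku": "SKU-200"}}'))
    print(dispatch('{"name": "queue_for_approval", "arguments": {"draft": "Sorry, SKU-200 is out of stock."}}'))
    print(dispatch('{"name": "delete_database", "arguments": {}}'))
```

The allow-list in `TOOLS` is the key design choice. The model can only ask, and your code only runs functions you registered. The approval queue keeps a human between the draft and the customer.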

3. Reasoning Models Reduce the Handholding Tax

Every AI system I've shipped has a "handholding tax" — the engineering effort required to catch the model when it makes dumb mistakes. Retries. Validation layers. Fallback prompts. Human-in-the-loop for edge cases.
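Here is what one slice of that tax can look like in code: a validation layer for invoice extraction, a bounded retry, and a hand-off to a person when every attempt fails. It is a sketch under assumptions. `extract` stands in for whatever model call you use, and the `line_items`/`total` field names and the 0.01 tolerance are hypothetical.

```python
from typing import Callable, Optional

# Hypothetical: `extract` wraps a model call that turns an invoice image into a dict
# like {"line_items": [{"amount": 1.5}, ...], "total": 3.0}.
Extractor = Callable[[bytes], dict]


def validate_invoice(data: dict, tolerance: float = 0.01) -> list[str]:
    """Return a list of problems; an empty list means the extraction passed."""
    problems = []
    items = data.get("line_items") or []
    if not items:
        problems.append("no line items")
    total = data.get("total")
    if total is None:
        problems.append("missing total")
    elif abs(sum(item.get("amount", 0) for item in items) - total) > tolerance:
        # Hallucinated or misread line items usually break this arithmetic check.
        problems.append("line items do not sum to total")
    return problems


def extract_with_retries(image: bytes, extract: Extractor, max_attempts: int = 3) -> Optional[dict]:
    """Call the model up to max_attempts times; return None to route to a human."""
    for _ in range(max_attempts):
        data = extract(image)
        if not validate_invoice(data):
            return data
    return None  # Human-in-the-loop: no attempt passed validation.
```

The `None` branch is where a person picks the document up. The retry count and tolerance are tuning knobs, not recommendations.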

Better reasoning means a smaller handholding tax. Not zero — never zero — but small enough that more use cases become economically viable. A system that needed 20% human review last year might only need 5% now. That's the difference between "AI project with ambiguous ROI" and "line item in next quarter's budget."
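The drop from 20% to 5% is easier to feel as staff hours. Here is a quick sketch, assuming a hypothetical 10,000 documents a month and 3 minutes per human review. Swap in your own numbers.

```python
def monthly_review_hours(items_per_month: int, review_rate: float, minutes_per_review: float) -> float:
    """Hours of human review per month when review_rate of items get flagged."""
    return items_per_month * review_rate * minutes_per_review / 60


if __name__ == "__main__":
    # Hypothetical volume and review time; replace with your own.
    for rate in (0.20, 0.05):
        hours = monthly_review_hours(10_000, rate, minutes_per_review=3)
        print(f"{rate:.0%} review rate -> {hours:.0f} hours/month")
```

Under those assumptions, review falls from about 100 hours a month to about 25.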

Where I'd Actually Use Muse Spark

Not everywhere. Let me be specific.

Good fits:

- Visual inspection and document processing (invoices, receipts, forms, IDs)

- Customer support where users send screenshots or photos

- Product recognition for ecommerce catalogs — especially marketplaces with user-uploaded listings

- Any workflow where the hardest step is "look at the image and decide something"

Bad fits:

- Pure text chatbots where Claude Sonnet or GPT-4o already work fine and cost less per call

- Ultra-low-latency applications (reasoning modes are slower by design — that's the tradeoff)

- Anything where data residency is non-negotiable and Meta's API terms don't clear legal

Here's my rule: if your problem is mostly text, you don't need Muse Spark. If your problem involves eyeballs — human eyeballs that currently have to look at things and make judgment calls — this is where multimodal reasoning models earn their keep.

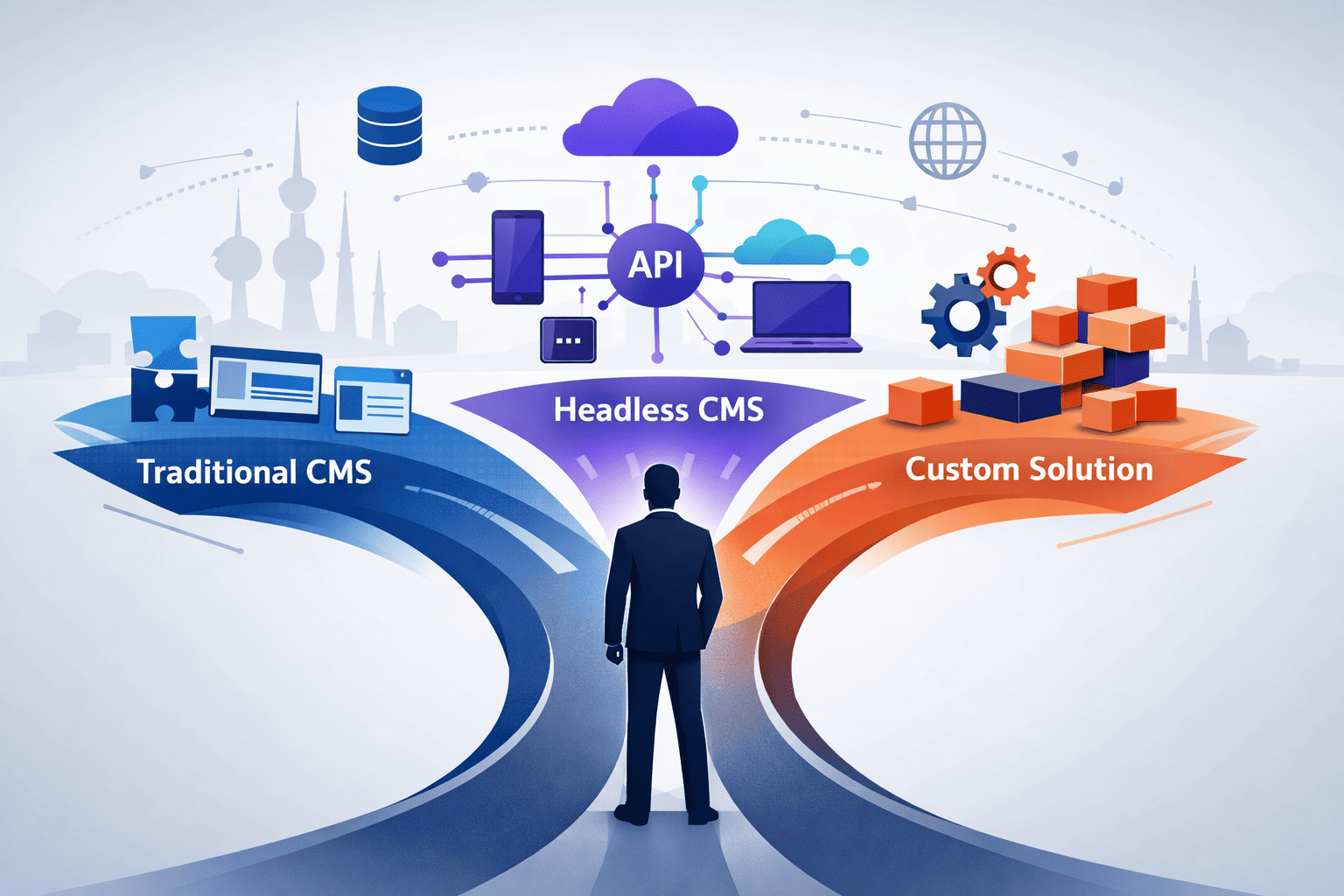

How We Pick AI Models for Client Projects

This question comes up in every single kickoff meeting. "Which AI should we use?"

My answer has nothing to do with brand loyalty. Here's the actual checklist we run at DSRPT:

- Start with the problem, not the model. What manual task is bleeding time or money? If you can't describe it in one sentence, you're not ready for AI yet.

- Match model capability to task complexity. Classification? Use something lightweight. Complex reasoning over visual data? Bring out the heavy machinery.

- Cost per call actually matters. A brilliant model at $0.30 per request will kill your unit economics if you're running 10,000 requests a day: that's $3,000 a day, or roughly $90,000 a month. Do the math before the demo.

- Build for swapping. The model you pick today won't be the best model in six months. Architect your system so you can swap providers without rewriting the world.

- Test on your data, not demo data. Every model looks great on the vendor's examples. I've lost count of how many times I've watched a "GPT-killer" choke on real client documents.

- Budget for reliability, not just intelligence. Smart is easy. Consistent is hard. Plan for the guardrails from day one.
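Several items on that checklist fit in one small harness: cost per call, building for swapping, and testing on your own data. Here is a minimal Python sketch of that idea, not a production framework. Every provider sits behind one interface, gets scored on your labeled documents, and gets a projected monthly bill. `DocumentModel`, `evaluate`, `StubModel`, the model names, and the 30-day month are all hypothetical. A real implementation would wrap each vendor's SDK behind `extract_total`.

```python
from dataclasses import dataclass
from typing import Protocol


class DocumentModel(Protocol):
    """Provider-agnostic interface: the rest of the system only ever sees this."""
    name: str
    cost_per_call_usd: float

    def extract_total(self, document: str) -> str: ...


@dataclass
class EvalResult:
    model: str
    accuracy: float
    monthly_cost_usd: float


def evaluate(model: DocumentModel, labeled: list[tuple[str, str]],
             calls_per_day: int, days: int = 30) -> EvalResult:
    """Score a model on your own labeled documents and project its monthly bill."""
    correct = sum(model.extract_total(doc) == expected for doc, expected in labeled)
    return EvalResult(
        model=model.name,
        accuracy=correct / len(labeled),
        monthly_cost_usd=model.cost_per_call_usd * calls_per_day * days,
    )


@dataclass
class StubModel:
    """Stand-in provider with canned answers, for illustration only."""
    name: str
    cost_per_call_usd: float
    answers: dict[str, str]

    def extract_total(self, document: str) -> str:
        return self.answers.get(document, "")


if __name__ == "__main__":
    labeled = [("invoice_a", "12.500"), ("invoice_b", "7.250"), ("invoice_c", "3.000")]
    big = StubModel("big-multimodal", 0.30, {"invoice_a": "12.500", "invoice_b": "7.250", "invoice_c": "3.000"})
    small = StubModel("small-cheap", 0.01, {"invoice_a": "12.500", "invoice_b": "7.250", "invoice_c": "8.000"})
    for model in (big, small):
        result = evaluate(model, labeled, calls_per_day=10_000)
        print(f"{result.model}: {result.accuracy:.0%} accurate, ${result.monthly_cost_usd:,.0f}/month")
```

At $0.30 a call and 10,000 calls a day, the projection comes to $90,000 a month. Put that number next to the accuracy score before anyone gets excited about a demo. Swapping providers then means writing one new class, not rewriting the pipeline.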

I wrote about the wider decision process in "How I Use AI as a Managing Partner." The same principles apply whether you're using AI yourself or shipping it to paying customers.

The Part Nobody Wants to Say

Most businesses asking about Muse Spark don't need Muse Spark. They need a straightforward integration of an existing model into a workflow they already understand. The bottleneck isn't model capability. It's clarity about what problem you're solving and discipline about measuring whether the AI is actually solving it.

I covered this trap in detail in "The AI Illusion: Why Tools Alone Don't Make You Smarter." The post still stands. New model, same lesson. The shiny tool doesn't fix the thinking.

What to Do This Week

If Muse Spark (or any frontier model announcement) has you wondering whether you're behind, here's what I'd actually do:

→ Pick one manual process in your business that costs real money. One. Not five.

→ Write down exactly what a human does today, step by step, including the boring bits.

→ Ask: "If an AI could do this reliably, what would it save us per month?"

→ If the answer is more than around KD 600 a month, you have a real AI project worth scoping. Talk to someone who builds these for a living.

→ If the answer is less, wait. Better models drop every quarter and the price keeps falling.
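The arithmetic behind that checklist fits in a few lines. Here is a minimal Python sketch, assuming you can put a rough minute count on each step and a loaded hourly staff cost. The invoice process, the 800 runs a month, and the KD 5/hour figure are hypothetical. The result is an upper bound, because it assumes the AI takes over every step with no handholding tax.

```python
from dataclasses import dataclass


@dataclass
class Step:
    description: str
    minutes: float  # minutes a person spends on this step, per occurrence


def monthly_manual_cost_kd(steps: list[Step], runs_per_month: int, hourly_cost_kd: float) -> float:
    """Staff cost of the process today: an upper bound on what AI could save."""
    minutes_per_run = sum(step.minutes for step in steps)
    return minutes_per_run * runs_per_month / 60 * hourly_cost_kd


def next_step(monthly_savings_kd: float, threshold_kd: float = 600) -> str:
    # Rule of thumb from the checklist: above roughly KD 600 a month, scope a project.
    return "scope an AI project" if monthly_savings_kd > threshold_kd else "wait for cheaper models"


if __name__ == "__main__":
    # Hypothetical process: manual invoice entry.
    steps = [
        Step("open email and download invoice", 2),
        Step("key line items into ERP", 8),
        Step("check totals", 2),
    ]
    savings = monthly_manual_cost_kd(steps, runs_per_month=800, hourly_cost_kd=5)
    print(f"KD {savings:,.0f}/month -> {next_step(savings)}")
```

With those made-up inputs, the process costs KD 800 a month in staff time, which clears the KD 600 bar.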

That's it. The Muse Spark release is a signal that the tools keep getting sharper. But the advantage doesn't go to whoever uses the newest model — it goes to whoever ships reliable AI systems into real workflows before their competitors do.

If you want to talk about where AI actually fits in your business — not the hype version, the honest version — let's have a conversation. We've been building this stuff long enough to tell you when to move and when to wait.

Frequently Asked Questions

What is Muse Spark and who made it?

Muse Spark is Meta's flagship AI model, released in April 2026 by the newly formed Meta Superintelligence Labs (MSL). It's a natively multimodal reasoning model with tool use, visual chain of thought, and multi-agent orchestration (its "Contemplating mode"). It's available via meta.ai and a private API preview for select developers.

Should my business use Muse Spark?

It depends on the use case. Muse Spark is strongest at visual reasoning tasks — document processing, product recognition, and image-based workflows. For text-only applications, Claude or GPT-4o are usually more cost-effective. Pick the model based on the specific problem, not the brand. If your hardest bottleneck involves a human looking at images or scanned documents, Muse Spark is worth testing.

How does Muse Spark compare to GPT-4o and Claude?

On reasoning benchmarks, Muse Spark is competitive with GPT Pro and Gemini Deep Think — 58% on Humanity's Last Exam and 38% on FrontierScience Research in Contemplating mode. Its real advantage is visual chain of thought and multi-agent orchestration for complex multimodal tasks. For pure text applications, Claude and GPT-4o are still excellent choices.

What does "tool use" mean in AI models like Muse Spark?

Tool use means the AI model can interact with external systems — calling APIs, querying databases, triggering workflows, or reading files. It's what turns a chat demo into software that can actually complete tasks inside a business process. Tool use is the foundation of agentic AI and the single most important capability for production deployments.

How should a business get started with AI integration?

Start with a specific business problem, not a specific technology. Identify a manual process that costs real time or money, map the steps a human takes today, then ask whether AI could do it reliably. If the monthly savings justify the build cost, pilot it. If they don't, wait — models keep getting better and prices keep dropping.